Our relationship with security

Security is a compromise between power and protection. The operating system you use should empower you to do things that your ancestors could only dream of while at the same time protecting your privacy and consent. If the operating system tries to protect you by reducing your power, the risk is that you will remove protections to give yourself more power. The danger is that you cannot provide informed consent if you are not informed of the risk, and you can never be fully informed of even most risks without devoting a significant amount of time to the study of security. So the operating system must try to sell you the power while subtly enforcing its protection.

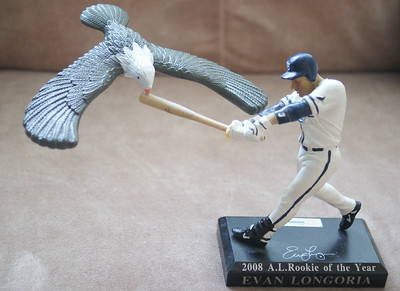

We need to be aware of our relationship with security. We don’t want unnecessary risk, but we also don’t want to live an unfulfilling life. We need to find the balance, but it’s too complex to have a definite middle ground. The balance we strike may look more like the toy eagle you can balance on your finger. Most systems are not built like this, and the toy exists as a novelty, but it also demonstrates the power available to us through engineering.

It’s in the interests of those who make software to make the experience of using that software something that we wish to repeat and recommend. In many instances, this ensures that the owners of such software will invest in the security of the system, but they will never invest in a system that reduces the amount of money made through the software. Unless we can control our own behavior, by not returning to the software and by recommending against its use, we have no power over the interests of those who own the software products we use. This does not mean feeling guilty for using Google’s software or making others feel guilty. Instead, I think we must treat our minds as part of the attack surface.

You can imagine an attack surface as the size of a target facing an archer: if the target turns to the side, it’s harder for the archer to hit because less of its surface faces the archer. Before we developed democracy, you could say that people were tricked into feudalism. Some dangerous people could hurt you, and you, being a farmer, weren’t skilled at killing people, but if you swore an oath to those who had those skills you could be protected from arbitrary violence. The flaw in the system was that those who were skilled at violence achieved great power over those who swore loyalty, and would cause great suffering to satisfy their whims. After all, if you think about it, what kind of person typically becomes skilled at violence? Of course, many people knew that this would happen. They understood how much pain was going to be caused, but it was less than the pain that existed before the system emerged. Most people didn’t know what was going to happen, though; they just saw what was happening at the time.

The Taliban took over Afghanistan when, after the Soviets had been kicked out of the country, those who had defeated them couldn’t agree on who would rule. The Taliban were heavily populated with orphaned children of the war who had grown up on the Pakistan side of the border, with Saudi-funded religious groups teaching them how to be the kind of men who acted with virtue and against vice. People welcomed the Taliban because when you live in a country where it is normal for young children to be missing limbs, you will welcome any form of stability.

The same thing occurs with good as well as bad: when we automate industries, we neglect the harm that is caused through progress. Yesterday it was more expensive to make food and more labor was involved. Today we have automated that, but what about the people who aren’t able to reskill? The same is true of the information age, where we are achieving things unimaginable even 30 years ago, but we all know people who aren’t able to keep up. I don’t want to put the brakes on, to smash the looms, to bring down our achievements. Far from it. But I want us to examine what we are doing so that we are aware of which direction we are going in before we decide on our next move.

Today the incentive for software providers is to ensure our engagement. Trying to demand that software be designed in our interest through whether we choose to engage is not going to work, because we do not have the luxury of infinite choice. The software that exists is the software supported by the system we live in, with all the flaws that come with that. If we want to create a different approach to software, we must create a different system. To destroy our previous system, we must first invent a better one that allows a smooth transition. The transition must not punish those who participate by denying them the joy of the previous system, or, if it does, that loss must be heavily compensated.

At the same time, we are seeing from cases like Stuxnet, SolarWinds, TikTok, and NSA dragnet surveillance that security and privacy are both becoming serious geopolitical issues. As states target each other through supply chain attacks, they weaken the same security we all rely on, and as data collection becomes weaponized, we are being turned into pawns in a game that no one should be playing.

All hope is not lost. There are solutions to these problems, and the most innovative ones are coming from open source projects. I’m planning to deep dive into each of the tools I think will help me improve my security and post about my journey here. I hope that the work I do as I explore will make it easier for others to follow. I’ll post what I find, what I had to sacrifice to use each piece of software, and what I got in return. Hit me up if you find it helpful!